HomebrewedDB: RGB-D Dataset for 6D Pose Estimation of 3D Objects

Roman Kaskman, Sergey Zakharov, Ivan Shugurov, Slobodan Ilic

- ✔ Reconstructed 3D models of high quality.

- ✔ Validation & test sequences for 13 scenes.

- ✔ Ground truth pose annotations for each object in the scene.

- ✔ RGB and Depth images captured with PrimeSense Carmine and Kinect 2.

- ✔ Scalability, light and texture change benchmarks.

Description

We present a dataset for 6D pose estimation that targets the following challenges: training from 3D models (both textured and textureless), scalability, occlusions, and changes in light conditions and object appearance. The dataset features 33 objects (17 toy, 8 household and 8 industry-relevant objects) over 13 scenes of various difficulty. We also present a set of benchmarks to test desired detector properties, focusing on scalability with respect to the number of objects and on robustness to changing light conditions, occlusions and clutter. We set a baseline for the presented benchmarks using the state-of-the-art DPOD detector. Considering the difficulty of making such datasets, we plan to release the code allowing other researchers to extend this dataset or make their own datasets in the future.

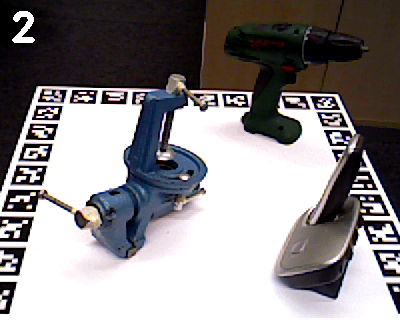

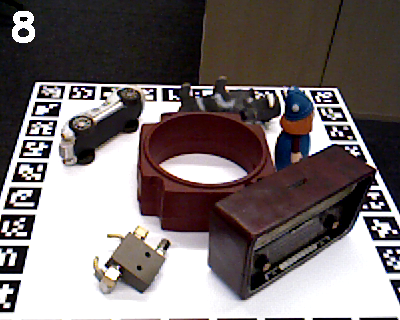

Objects

The dataset features 33 objects serving different purposes (toys, household objects, industrial objects). The reconstructed 3D models are stored as .ply files with associated per-vertex colors. Model coordinates are given in millimeters, and surface normal vectors are precomputed.
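As an illustration of the model format described above, the following minimal sketch reads the header of a PLY file and, for ASCII files only, converts vertex coordinates from millimeters to meters. The property names (`nx`, `ny`, `nz`, `red`, `green`, `blue`) follow common PLY conventions and are an assumption here, as is the inline sample; the actual model files may be binary and may use a different property layout.

```python
import io

MM_TO_M = 0.001  # models are stored in millimeters


def read_ply_header(stream):
    """Parse a PLY header from a binary stream; return (format, elements)."""
    if stream.readline().strip() != b"ply":
        raise ValueError("not a PLY file")
    fmt = None
    elements = []  # list of (name, count, [property names])
    for raw in iter(stream.readline, b""):
        tokens = raw.decode("ascii").split()
        if not tokens or tokens[0] == "comment":
            continue
        if tokens[0] == "format":
            fmt = tokens[1]
        elif tokens[0] == "element":
            elements.append((tokens[1], int(tokens[2]), []))
        elif tokens[0] == "property":
            elements[-1][2].append(tokens[-1])  # last token is the name
        elif tokens[0] == "end_header":
            return fmt, elements
    raise ValueError("missing end_header")


def read_ascii_vertices(stream):
    """Read vertices from an ASCII PLY stream, converting positions to meters."""
    fmt, elements = read_ply_header(stream)
    if fmt != "ascii":
        raise NotImplementedError("this sketch only handles ASCII PLY")
    name, count, props = elements[0]
    if name != "vertex":
        raise ValueError("expected 'vertex' as the first element")
    vertices = []
    for _ in range(count):
        values = dict(zip(props, stream.readline().decode("ascii").split()))
        vertices.append({
            "xyz_m": tuple(float(values[k]) * MM_TO_M for k in ("x", "y", "z")),
            "normal": tuple(float(values[k]) for k in ("nx", "ny", "nz")),
            "rgb": tuple(int(values[k]) for k in ("red", "green", "blue")),
        })
    return vertices


# Hypothetical two-vertex sample, not taken from the dataset.
SAMPLE = b"""ply
format ascii 1.0
element vertex 2
property float x
property float y
property float z
property float nx
property float ny
property float nz
property uchar red
property uchar green
property uchar blue
end_header
0 0 0 0 0 1 255 0 0
100 0 0 0 0 1 0 255 0
"""

vertices = read_ascii_vertices(io.BytesIO(SAMPLE))
```

For real use, an established mesh library is likely a better choice than a hand-written parser; the sketch only makes the unit convention and per-vertex attributes explicit.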

Validation and test sequences

The dataset features 13 scenes of varying complexity. Each scene was captured with two sensors: PrimeSense Carmine and Kinect 2. For each scene there are 340 validation RGB-D frames captured on a rotating turntable and 1000 test RGB-D frames captured in handheld mode. Pose labels are provided for every object in every frame.
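To show how a 6D pose label relates a model to a frame, here is a minimal sketch that applies a pose to model points and projects them into the image. It assumes the pose is given as a 3x3 rotation matrix and a translation in millimeters mapping model coordinates to camera coordinates; the exact annotation layout should be checked against the downloaded files. The intrinsics (`fx`, `fy`, `cx`, `cy`) and all numeric values are made up for illustration.

```python
def transform_points(R, t, points):
    """Apply the rigid transform X_cam = R * X_model + t to each point."""
    return [
        tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
        for p in points
    ]


def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-space point to pixel coordinates."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical pose: 90 degree rotation about the z axis, 500 mm in front of the camera.
R = [[0.0, -1.0, 0.0],
     [1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0]]
t = [0.0, 0.0, 500.0]

model_points_mm = [(10.0, 0.0, 0.0)]
camera_points_mm = transform_points(R, t, model_points_mm)

# Hypothetical intrinsics, not those of either sensor.
pixel = project(camera_points_mm[0], fx=500.0, fy=500.0, cx=320.0, cy=240.0)
```

Because the models are stored in millimeters, keeping the translation in the same unit avoids a scale mismatch when overlaying a model on a depth image.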

License

This dataset is released under the Creative Commons Zero license.

Download

The dataset is hosted on the BOP challenge web server and comes in two versions:

- a smaller subset used in the BOP 2019 challenge.

- the full dataset, including both PrimeSense and Microsoft Kinect 2 sequences. Kinect 2 images have a higher resolution, which makes the dataset larger but gives better RGB image quality. Note that automated evaluation on these images is not yet available, but will be soon. Check the BOP result submission page.

Code

The source code used for preparing the dataset has been released on the Siemens GitHub and is open source. It supports data acquisition and has interfaces to PrimeSense, Kinect 2, Kinect Azure and Intel RealSense cameras, as well as an interface for loading images previously saved to the hard drive. Additionally, it allows estimation and refinement of 6D poses of 3D objects, which have to be available beforehand as CAD models or 3D reconstructed models. We used the commercial Artec Eva scanner to create the 3D models for this dataset. The initial 6D pose estimation is based on the PPF method of B. Drost and S. Ilic, Model globally, match locally: Efficient and robust 3D object recognition, CVPR 2010, which is still one of the best-performing methods on the BOP 6D pose estimation leaderboard. For that we used the implementation from HALCON, which is either commercially available or free for educational purposes (check the licenses in the GitHub repository of our open-source code).
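For readers unfamiliar with the PPF method cited above, the sketch below computes the four-dimensional point pair feature defined in the paper for two oriented points: the distance between them and three angles involving their normals. It is an independent illustration, not the HALCON implementation or the released code, and the function names and sample values are hypothetical.

```python
import math


def _norm(v):
    return math.sqrt(sum(x * x for x in v))


def _angle(a, b):
    """Angle in radians between two non-zero vectors, in [0, pi]."""
    cos = sum(x * y for x, y in zip(a, b)) / (_norm(a) * _norm(b))
    return math.acos(max(-1.0, min(1.0, cos)))  # clamp against rounding


def point_pair_feature(p1, n1, p2, n2):
    """Return (||d||, angle(n1, d), angle(n2, d), angle(n1, n2)) with d = p2 - p1."""
    d = tuple(b - a for a, b in zip(p1, p2))
    if _norm(d) == 0.0:
        raise ValueError("points must be distinct")
    return (_norm(d), _angle(n1, d), _angle(n2, d), _angle(n1, n2))


# Hypothetical oriented points, positions in millimeters.
feature = point_pair_feature(
    p1=(0.0, 0.0, 0.0), n1=(0.0, 0.0, 1.0),
    p2=(10.0, 0.0, 0.0), n2=(1.0, 0.0, 0.0),
)
```

In the original method, such features are discretized and used as keys of a hash table built from the model, so that scene point pairs can vote for candidate poses; that matching and voting stage is omitted here.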

Acknowledgements

Contact

For questions, concerns and general feedback, please contact Slobodan Ilic.