SensorVis

Scientific Director: Gudrun Klinker
Contact Person(s): Marcus Tönnis
In industrial collaboration with: ZT-3, BMW Forschung & Technik GmbH

Abstract

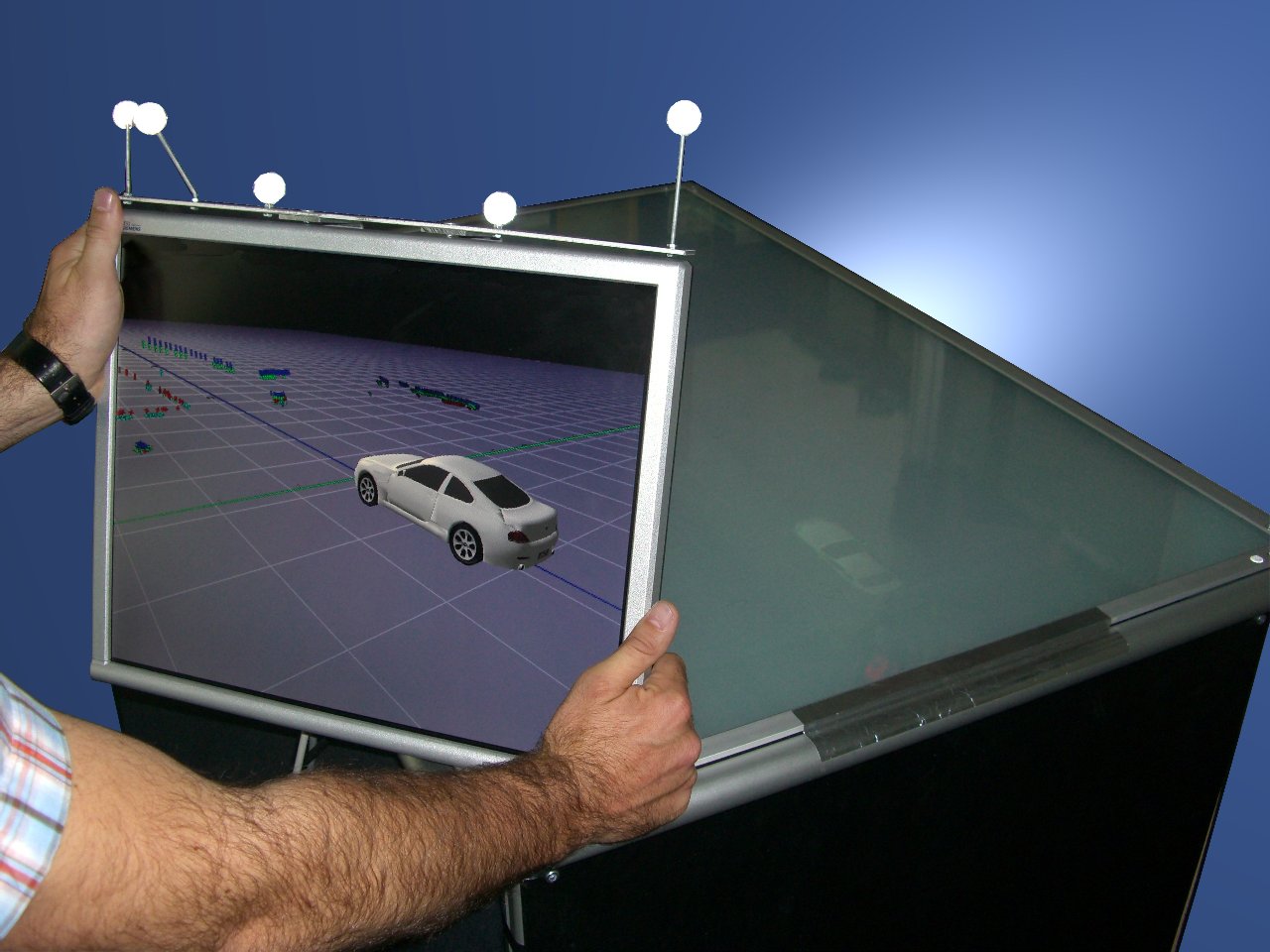

Modern cars are equipped with an increasing number of sensors that perceive the environment, especially the area in front of the vehicle. Fusing this sensor data and analyzing it further to detect other traffic participants is expected to help driver assistance systems increase driver safety.

For the development of such multi-sensor systems and driver assistance systems, it is necessary to visualize all levels of the data, from the raw data of each individual sensor up to fused data and interpreted contextual data. Such visualizations are needed for debugging during the development of perception systems. They will also become invaluable once cars with increasing sensor functionality reach the market and (re)calibration becomes part of the daily production and maintenance routine, since the correct operation of the sensors has to be evaluated and maintained on a regular basis. Visualization of sensor data can also bridge the gap between researchers in sensor technology and researchers in HMI presentation concepts, leading to new, preferably visual, interaction schemes in safety assistance systems.

Such future assistance systems might use large-scale Head-Up Display (HUD) technology to place information in the driver's field of view. Combining HUDs with sensor systems enables presentation schemes that exploit the fact that spatially embedded information does not require the driver to look away from the road, for instance at the dashboard. The focus of analysis then lies in finding minimally distracting presentation schemes. Examples include warning symbols superimposed on obstacles, as well as awareness guidance systems for objects that are not in the driver's view [12]. Augmented Reality (AR) could allow ergonomic testing of such presentation schemes before large-scale Head-Up Displays are available in real cars. This accelerates technology transfer, since research on the technology and on human factors can proceed in parallel. Human-computer interaction researchers can then experience and evaluate any kind of presentation scheme long before it is technically mature. Their results can in turn guide technological research by indicating where it should focus.


Publications

2007

M. Tönnis, R. Lindl, L. Walchshäusl, G. Klinker. Visualization of Spatial Sensor Data in the Context of Automotive Environment Perception Systems. The Sixth IEEE and ACM International Symposium on Mixed and Augmented Reality, Nara, Japan, Nov. 13-16, 2007, pp. 115-124.

Team

Contact Person(s)

Marcus Tönnis


Location

Technische Universität München
Institut für Informatik / I16
Boltzmannstr. 3
85748 Garching bei München
Tel.: +49 89 289-17058
Fax: +49 89 289-17059


Please contact Marcus Tönnis about available student projects within this research project.