| 2010 |

|

L. Wang, J. Traub, S. Weidert, S.M. Heining, E. Euler, N. Navab

Parallax-Free Intra-Operative X-ray Image Stitching

MICCAI 2009 special issue of the journal Medical Image Analysis

(bib)

|

|

M. Wieczorek, A. Aichert, O. Kutter, C. Bichlmeier, J. Landes, S.M. Heining, E. Euler, N. Navab

GPU-accelerated Rendering for Medical Augmented Reality in Minimally-Invasive Procedures

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2010), Aachen, Germany, March 14-16 2010

(bib)

|

|

P. Dressel, L. Wang, O. Kutter, J. Traub, S.M. Heining, N. Navab

Intraoperative positioning of mobile C-arms using artificial fluoroscopy

SPIE Medical Imaging, San Diego, California, USA, February 2010

(bib)

|

| 2009 |

|

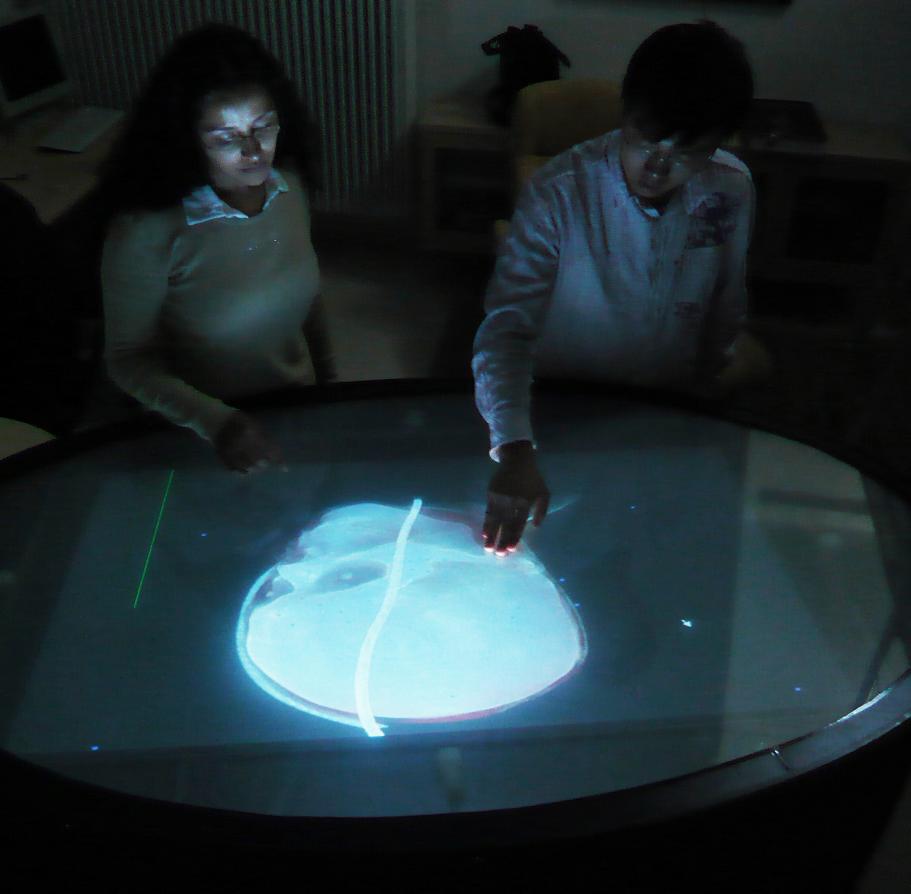

C. Bichlmeier, S.M. Heining, L. Omary, P. Stefan, B. Ockert, E. Euler, N. Navab

MeTaTop: A Multi-Sensory and Multi-User Interface for Collaborative Analysis of Medical Imaging Data

Interactive Demo (ITS 2009), Banff, Canada, November 2009

(bib)

|

|

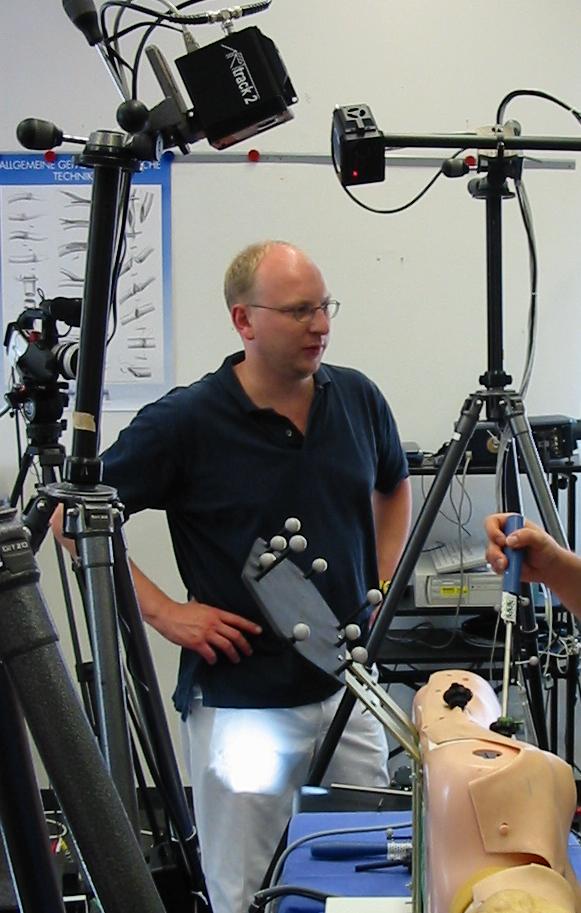

T. Blum, S.M. Heining, O. Kutter, N. Navab

Advanced Training Methods using an Augmented Reality Ultrasound Simulator

8th IEEE and ACM International Symposium on Mixed and Augmented Reality (ISMAR 2009), Orlando, USA, October 2009, pp. 177-178. The original publication is available online at ieee.org.

(bib)

|

|

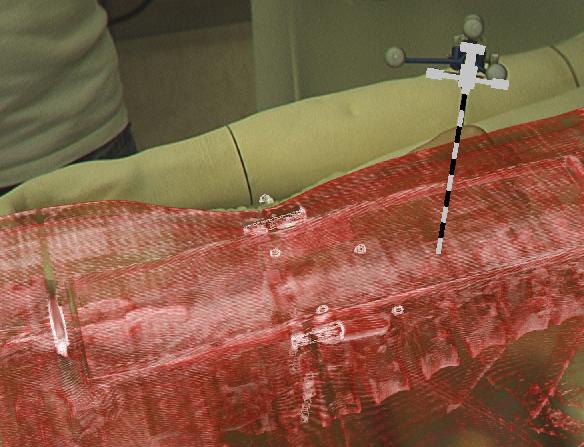

C. Bichlmeier, S. Holdstock, S.M. Heining, S. Weidert, E. Euler, O. Kutter, N. Navab

Contextual In-Situ Visualization for Port Placement in Keyhole Surgery: Evaluation of Three Target Applications by Two Surgeons and Eighteen Medical Trainees

The 8th IEEE and ACM International Symposium on Mixed and Augmented Reality, Orlando, US, Oct. 19 - 22, 2009.

(bib)

|

|

C. Bichlmeier, M. Kipot, S. Holdstock, S.M. Heining, E. Euler, N. Navab

A Practical Approach for Intraoperative Contextual In-Situ Visualization

International Workshop on Augmented environments for Medical Imaging including Augmented Reality in Computer-aided Surgery (AMI-ARCS 2009), London, UK, September 2009

(bib)

|

|

A. Ahmadi, N. Padoy, K. Rybachuk, H. Feußner, S.M. Heining, N. Navab

Motif Discovery in OR Sensor Data with Application to Surgical Workflow Analysis and Activity Detection

MICCAI Workshop on Modeling and Monitoring of Computer Assisted Interventions (M2CAI), London, UK, September 2009

(bib)

|

|

C. Bichlmeier, S.M. Heining, M. Feuerstein, N. Navab

The Virtual Mirror: A New Interaction Paradigm for Augmented Reality Environments

IEEE Trans. Med. Imag., vol. 28, no. 9, pp. 1498-1510, September 2009

(bib)

|

|

L. Wang, J. Traub, S. Weidert, S.M. Heining, E. Euler, N. Navab

Parallax-free Long Bone X-ray Image Stitching

Medical Image Computing and Computer-Assisted Intervention (MICCAI), London, UK, September 20-24 2009

(bib)

|

|

B. Ockert, C. Bichlmeier, S.M. Heining, O. Kutter, N. Navab, E. Euler

Development of an Augmented Reality (AR) training environment for orthopedic surgery procedures

Proceedings of The 9th Computer Assisted Orthopaedic Surgery (CAOS 2009), Boston, USA, June, 2009

(bib)

|

|

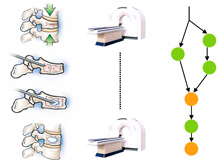

N. Navab, S.M. Heining, J. Traub

Camera Augmented Mobile C-arm (CAMC): Calibration, Accuracy Study and Clinical Applications

IEEE Transactions on Medical Imaging, 29 (7), 1412-1423

(bib)

|

|

L. Wang, J. Traub, S.M. Heining, S. Benhimane, R. Graumann, E. Euler, N. Navab

Long Bone X-ray Image Stitching using C-arm Motion Estimation

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2009), Heidelberg, Germany, March 22-24 2009

(bib)

|

|

L. Wang, S. Weidert, J. Traub, S.M. Heining, C. Riquarts, E. Euler, N. Navab

Camera Augmented Mobile C-arm: Towards Real Patient Study

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2009), Heidelberg, Germany, March 22-24 2009

(bib)

|

| 2008 |

|

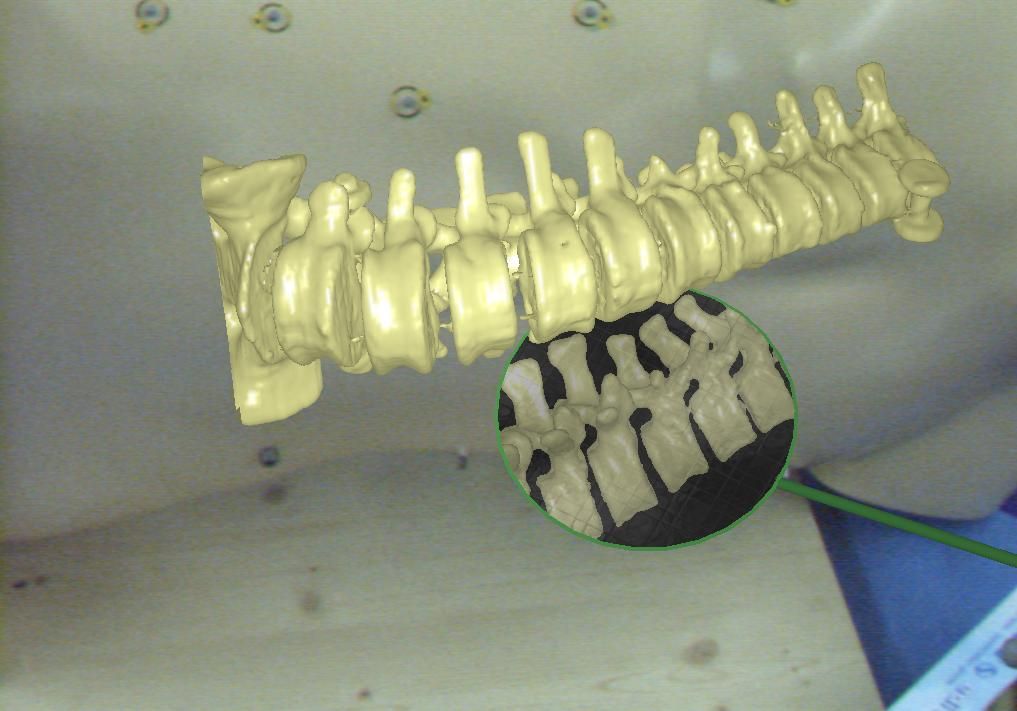

C. Bichlmeier, B. Ockert, S.M. Heining, A. Ahmadi, N. Navab

Stepping into the Operating Theater: ARAV - Augmented Reality Aided Vertebroplasty

The 7th IEEE and ACM International Symposium on Mixed and Augmented Reality, Cambridge, UK, Sept. 15 - 18, 2008.

(bib)

|

|

J. Traub, T. Sielhorst, S.M. Heining, N. Navab

Advanced Display and Visualization Concepts for Image Guided Surgery

IEEE/OSA Journal of Display Technology; Special Issue on Medical Displays, Volume 4, Issue 4, Dec. 2008

(bib)

|

|

O. Kutter, A. Aichert, C. Bichlmeier, J. Traub, S.M. Heining, B. Ockert, E. Euler, N. Navab

Real-time Volume Rendering for High Quality Visualization in Augmented Reality

International Workshop on Augmented environments for Medical Imaging including Augmented Reality in Computer-aided Surgery (AMI-ARCS 2008), New York, USA, September 2008

(bib)

|

|

C. Bichlmeier, B. Ockert, O. Kutter, M. Rustaee, S.M. Heining, N. Navab

The Visible Korean Human Phantom: Realistic Test & Development Environments for Medical Augmented Reality

International Workshop on Augmented environments for Medical Imaging including Augmented Reality in Computer-aided Surgery (AMI-ARCS 2008), New York, USA, September 2008

(bib)

|

|

A. Ahmadi, N. Padoy, S.M. Heining, H. Feußner, M. Daumer, N. Navab

Introducing Wearable Accelerometers in the Surgery Room for Activity Detection

7. Jahrestagung der Deutschen Gesellschaft für Computer- und Roboter-Assistierte Chirurgie (CURAC 2008)

(bib)

|

|

J. Traub, A. Ahmadi, N. Padoy, L. Wang, S.M. Heining, E. Euler, P. Jannin, N. Navab

Workflow Based Assessment of the Camera Augmented Mobile C-arm System

International Workshop on Augmented Reality environments for Medical Imaging and Computer-aided Surgery (AMI-ARCS 2008), New York, NY, USA, September 2008

(bib)

|

|

L. Wang, J. Traub, S.M. Heining, S. Benhimane, R. Graumann, E. Euler, N. Navab

Long Bone X-ray Image Stitching Using Camera Augmented Mobile C-arm

Medical Image Computing and Computer-Assisted Intervention, MICCAI, 2008, New York, USA, September 6-10 2008

(bib)

|

|

F. Wimmer, C. Bichlmeier, S.M. Heining, N. Navab

Creating a Vision Channel for Observing Deep-Seated Anatomy in Medical Augmented Reality

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2008), Munich, Germany, April 2008

(bib)

|

|

J. Traub, S.M. Heining, E. Euler, N. Navab

Two camera augmented mobile C-arm: System setup and first experiments

Proceedings of The 8th Computer Assisted Orthopaedic Surgery (CAOS 2008), Hong Kong, China, June, 2008

(bib)

|

|

S.M. Heining, C. Bichlmeier, E. Euler, N. Navab

Smart Device: Virtually Extended Surgical Drill

Proceedings of The 8th Computer Assisted Orthopaedic Surgery (CAOS 2008), Hong Kong, China, June, 2008

(bib)

|

|

M. Feuerstein, T. Mussack, S.M. Heining, N. Navab

Intraoperative Laparoscope Augmentation for Port Placement and Resection Planning in Minimally Invasive Liver Resection

IEEE Trans. Med. Imag., vol. 27, no. 3, pp. 355-369, March 2008

(bib)

|

| 2007 |

|

C. Bichlmeier, S.M. Heining, M. Rustaee, N. Navab

Laparoscopic Virtual Mirror for Understanding Vessel Structure: Evaluation Study by Twelve Surgeons

The Sixth IEEE and ACM International Symposium on Mixed and Augmented Reality, Nara, Japan, Nov. 13 - 16, 2007.

(bib)

|

|

C. Bichlmeier, F. Wimmer, S.M. Heining, N. Navab

Contextual Anatomic Mimesis: Hybrid In-Situ Visualization Method for Improving Multi-Sensory Depth Perception in Medical Augmented Reality

The Sixth IEEE and ACM International Symposium on Mixed and Augmented Reality, Nara, Japan, Nov. 13 - 16, 2007.

(bib)

|

|

J. Traub, H. Heibel, P. Dressel, S.M. Heining, R. Graumann, N. Navab

A Multi-View Opto-Xray Imaging System: Development and First Application in Trauma Surgery

Proceedings of Medical Image Computing and Computer-Assisted Intervention (MICCAI 2007), Brisbane, Australia, October/November 2007.

(bib)

|

|

C. Bichlmeier, M. Rustaee, S.M. Heining, N. Navab

Virtually Extended Surgical Drilling Device: Virtual Mirror for Navigated Spine Surgery

Proceedings of Medical Image Computing and Computer-Assisted Intervention (MICCAI 2007), Brisbane, Australia, October/November 2007.

(bib)

|

|

M. Feuerstein, T. Mussack, S.M. Heining, N. Navab

Registration-free Laparoscope Superimposition for Intra-Operative Planning of Liver Resection

3rd Russian-Bavarian Conference on Biomedical Engineering, Erlangen, Germany, July 2/3 2007

(bib)

|

|

P. Stefan, J. Traub, S.M. Heining, C. Riquarts, T. Sielhorst, E. Euler, N. Navab

Hybrid navigation interface: a comparative study

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2007), Munich, Germany, March 2007, pp. 81-86

(bib)

|

|

T. Klein, S. Benhimane, J. Traub, S.M. Heining, E. Euler, N. Navab

Interactive Guidance System for C-arm Repositioning without Radiation

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2007), Munich, Germany, March 2007, pp. 21-25

(bib)

|

|

C. Bichlmeier, T. Sielhorst, S.M. Heining, N. Navab

Improving Depth Perception in Medical AR: A Virtual Vision Panel to the Inside of the Patient

Proceedings of Bildverarbeitung fuer die Medizin (BVM 2007), Munich, Germany, March 2007

(bib)

|

|

M. Feuerstein, T. Mussack, S.M. Heining, N. Navab

Registration-Free Laparoscope Augmentation for Intra-Operative Liver Resection Planning

SPIE Medical Imaging, San Diego, California, USA, 17-22 February 2007

(bib)

|

| 2006 |

|

N. Navab, S. Wiesner, S. Benhimane, E. Euler, S.M. Heining

Visual Servoing for Intraoperative Positioning and Repositioning of Mobile C-arms

Proceedings of Medical Image Computing and Computer-Assisted Intervention (MICCAI 2006), Copenhagen, Denmark, October 2006

(bib)

|

|

J. Traub, P. Stefan, S.M. Heining, T. Sielhorst, C. Riquarts, E. Euler, N. Navab

Hybrid navigation interface for orthopedic and trauma surgery

Proceedings of Medical Image Computing and Computer-Assisted Intervention (MICCAI 2006), Copenhagen, Denmark, October 2006, pp. 373-380

(bib)

|

|

T. Sielhorst, C. Bichlmeier, S.M. Heining, N. Navab

Depth perception - a major issue in medical AR: Evaluation study by twenty surgeons

Proceedings of Medical Image Computing and Computer-Assisted Intervention (MICCAI 2006), Copenhagen, Denmark, October 2006, pp. 364-372

The original publication is available online at www.springerlink.com

(bib)

|

|

J. Traub, P. Stefan, S.M. Heining, T. Sielhorst, C. Riquarts, E. Euler, N. Navab

Towards a Hybrid Navigation Interface: Comparison of a Slice Based Navigation System with In-situ Visualization

Proceedings of International Workshop on Medical Imaging and Augmented Reality (MIAR 2006), Shanghai, China, August, 2006, pp. 179-186

(bib)

|

|

S.M. Heining, P. Stefan, L. Omary, S. Wiesner, T. Sielhorst, N. Navab, F. Sauer, E. Euler, W. Mutschler, J. Traub

Evaluation of an in-situ visualization system for navigated trauma surgery

Journal of Biomechanics 2006; Vol. 39 Suppl. 1, page 209

(bib)

|

|

S.M. Heining, S. Wiesner, E. Euler, N. Navab

CAMC (camera augmented mobile c-arm) - first clinical application in a cadaver study

Journal of Biomechanics 2006; Vol. 39 Suppl. 1, page 210

(bib)

|

|

J. Traub, P. Stefan, S.M. Heining, T. Sielhorst, C. Riquarts, E. Euler, N. Navab

Stereoscopic augmented reality navigation for trauma surgery: cadaver experiment and usability study

International Journal of Computer Assisted Radiology and Surgery, 2006; Vol. 1 Suppl. 1, page 30 - 31. The original publication is available online at www.springerlink.com

(bib)

|

|

S.M. Heining, S. Wiesner, E. Euler, N. Navab

Pedicle screw placement under video-augmented fluoroscopic control. First clinical application in a cadaver study

International Journal of Computer Assisted Radiology and Surgery, 2006; Vol. 1 Suppl. 1, page 189-190. The original publication is available online at www.springerlink.com

(bib)

|

|

S.M. Heining, P. Stefan, F. Sauer, E. Euler, N. Navab, J. Traub

Evaluation of an in-situ visualization system for navigated trauma surgery

Proceedings of The 6th Computer Assisted Orthopaedic Surgery (CAOS 2006), Montreal, Canada, June, 2006

(bib)

|

|

S.M. Heining, S. Wiesner, E. Euler, W. Mutschler, N. Navab

Locking of intramedullary nails under video-augmented fluoroscopic control: first clinical application in a cadaver study

Proceedings of The 6th Computer Assisted Orthopaedic Surgery (CAOS 2006), Montreal, Canada, June, 2006

(bib)

|