Perception for humanoid robotics

We offer Master's thesis and Guided Research topics in the field of computer vision and robotics, in collaboration with the Chair of Applied Mechanics.

The project topics concern the application of computer vision and 3D perception techniques to humanoid robotics. In particular, they focus on one of the following two tasks:

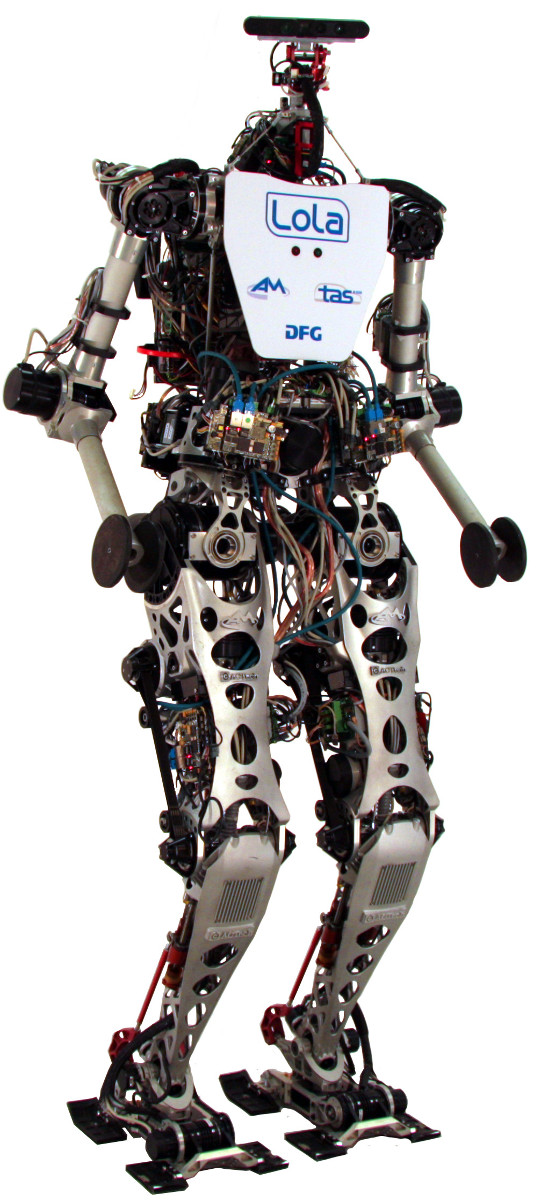

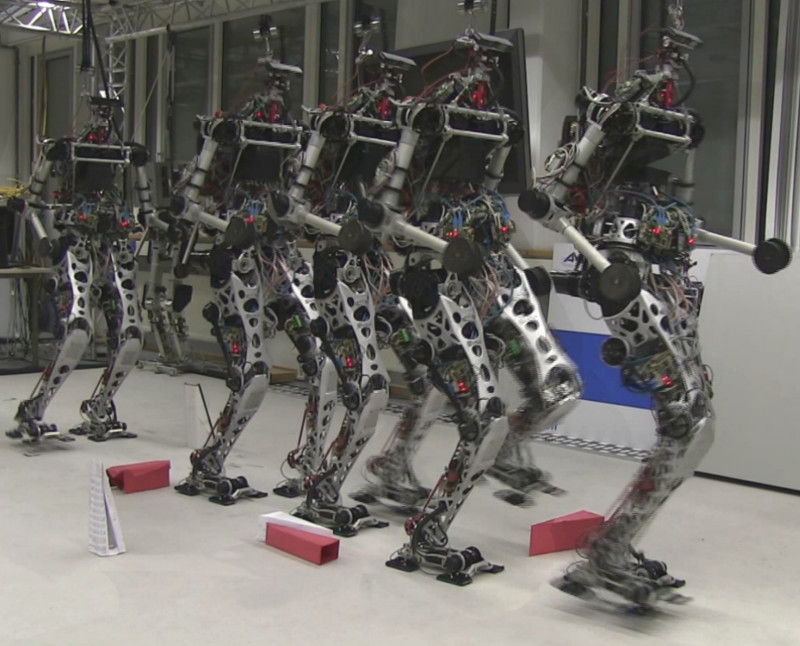

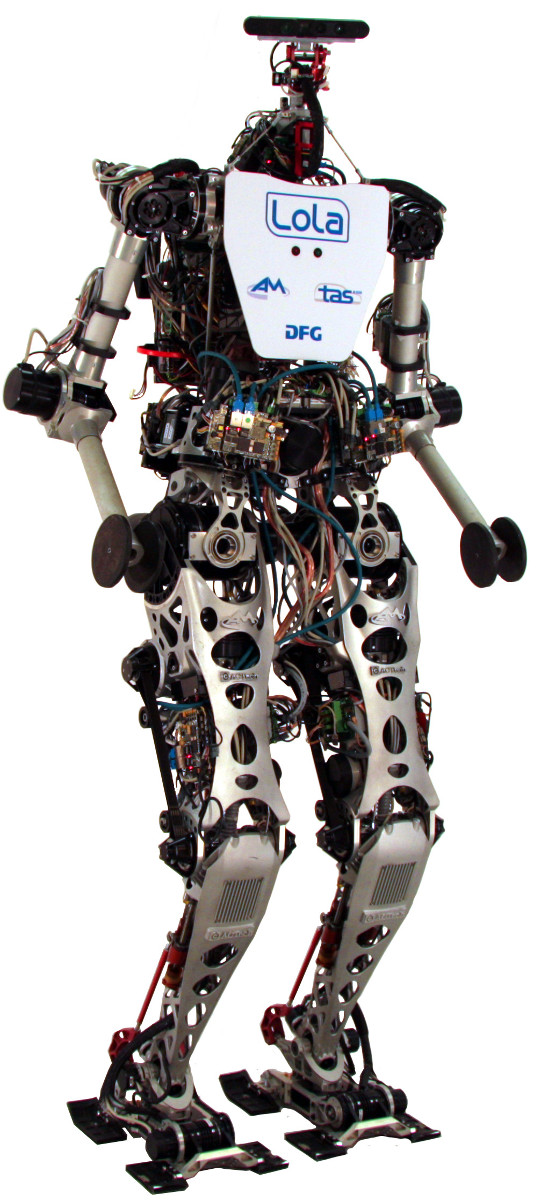

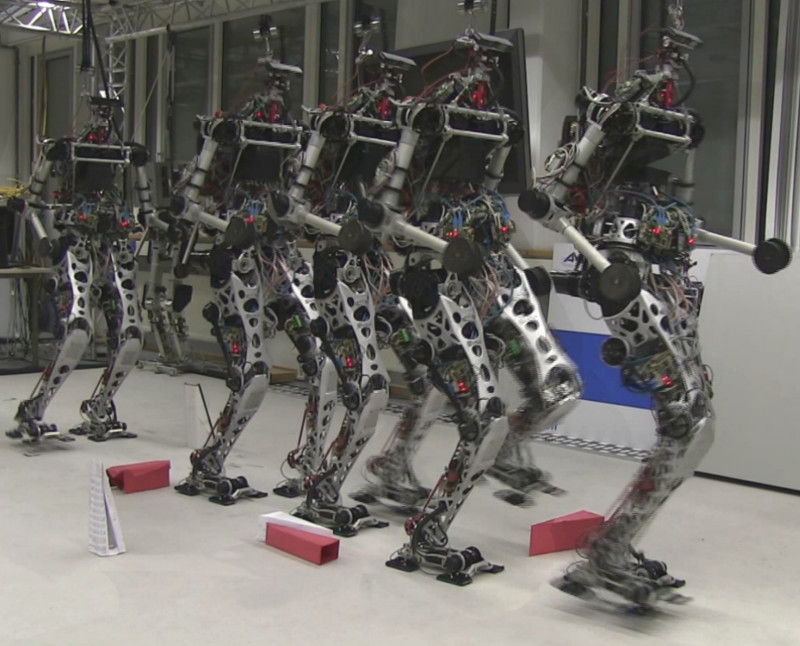

- Object detection and tracking for the humanoid Lola: Lola is the humanoid robot being developed at the Chair of Applied Mechanics (as shown in this demonstration video). The main task of this project is to develop an object detection and tracking module that runs in parallel with the existing threads of Lola's operating system, detects a specific object class (e.g., a chair or a box) in the scene, and keeps track of it over time. The goal is to enable the humanoid, through its navigation and locomotion modules, to follow the object as it moves around the scene.
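
To make the "detect and keep track" part of the task concrete, the sketch below shows one simple way to give per-frame detections stable identities: a greedy intersection-over-union (IoU) tracker. It is purely illustrative and not part of Lola's software. The class `IouTracker`, its thresholds `min_iou` and `max_missed`, and the `(x_min, y_min, x_max, y_max)` box format are hypothetical assumptions, and the boxes are assumed to come from whatever object detector the module uses.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in image pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned bounding boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


@dataclass
class IouTracker:
    """Hypothetical greedy IoU tracker that keeps object IDs stable across frames."""
    min_iou: float = 0.3   # assumed minimum overlap needed to continue a track
    max_missed: int = 5    # assumed number of frames a track may go unmatched
    next_id: int = 0
    tracks: Dict[int, Box] = field(default_factory=dict)
    missed: Dict[int, int] = field(default_factory=dict)

    def update(self, detections: List[Box]) -> Dict[int, Box]:
        """Associate this frame's detections with existing tracks; return live tracks."""
        unmatched = list(detections)
        for tid, box in list(self.tracks.items()):
            # Greedily take the unmatched detection that overlaps this track the most.
            best: Optional[Box] = max(unmatched, key=lambda d: iou(box, d), default=None)
            if best is not None and iou(box, best) >= self.min_iou:
                self.tracks[tid] = best
                self.missed[tid] = 0
                unmatched.remove(best)
            else:
                # No match: keep the last box, but drop the track if unseen too long.
                self.missed[tid] += 1
                if self.missed[tid] > self.max_missed:
                    del self.tracks[tid]
                    del self.missed[tid]
        # Every detection left over starts a new track with a fresh ID.
        for det in unmatched:
            self.tracks[self.next_id] = det
            self.missed[self.next_id] = 0
            self.next_id += 1
        return dict(self.tracks)
```

Calling `update` once per camera frame returns a mapping from track ID to the latest box, which a navigation layer could use to pick the object to follow. Greedy IoU matching is chosen here only for brevity; a module running on a walking robot would likely need a motion model (e.g., a Kalman filter) and an optimal assignment step to cope with camera shake and occlusions.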

- SLAM for the humanoid Lola: the idea of this project is to integrate a Simultaneous Localization and Mapping (SLAM) algorithm as an additional perception module within Lola's operating system. Through SLAM, we aim to reduce the drift currently present in the robot's kinematic system and to obtain a 3D reconstruction of the environment, which is needed to estimate the surfaces (in particular, walls) present in the robot's surroundings.

The goal of this project is to handle the two main issues of mounting a SLAM system on a robot:

(1) the vibrations caused by the robot's locomotion, which may cause the system to lose track of the robot's current position, and

(2) featureless regions, which do not contain enough information for SLAM to perform localization.
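
As a rough illustration of issue (2), the sketch below flags camera frames that may be too featureless for feature-based tracking. It is a hypothetical heuristic, not the project's method: it uses the fraction of pixels with a strong intensity gradient as a stand-in for texture, whereas an actual SLAM front end would typically count detected keypoints instead. The function names, the list-of-lists grayscale image format, and the thresholds `grad_thresh` and `min_fraction` are all assumptions.

```python
from typing import List

# Hypothetical image format: grayscale, row-major, intensities in [0, 255].
Image = List[List[float]]


def textured_fraction(img: Image, grad_thresh: float = 20.0) -> float:
    """Fraction of interior pixels whose central-difference gradient exceeds grad_thresh."""
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        return 0.0
    strong = 0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Central differences approximate the horizontal and vertical gradient.
            gx = (img[y][x + 1] - img[y][x - 1]) / 2.0
            gy = (img[y + 1][x] - img[y - 1][x]) / 2.0
            if (gx * gx + gy * gy) ** 0.5 > grad_thresh:
                strong += 1
    return strong / ((h - 2) * (w - 2))


def is_featureless(img: Image, min_fraction: float = 0.05, grad_thresh: float = 20.0) -> bool:
    """Heuristically flag a frame with too little texture for feature-based tracking."""
    return textured_fraction(img, grad_thresh) < min_fraction
```

A check like this could, for instance, tell the perception module when to rely more heavily on the robot's kinematic estimate instead of visual tracking; how such a fallback should actually work is part of what the project would investigate.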

For more information about the LOLA project, please refer to this page.

If you are interested in any of these topics, please contact us via e-mail:

Shun-Cheng Wu

Federico Tombari