Active Vision in Interactive Spaces

Diploma Thesis

Active Vision goes beyond plain sensing technology and includes strategies for observation. Rather than just processing snapshots, the observer and sensor continually interact to purposefully analyze visual sensory data and answer specific questions posed by the observer. The subject of this thesis is the exploitation of the Active Vision paradigm in interactive spaces. One example of such a space is the eXperience Induction Machine (XIM). This human-accessible mixed-reality space is operated at the Institut Universitari de l'Audiovisual (IUA) in Barcelona to support research on mixed reality using biologically inspired models of sensor and effector systems.

The eXperience Induction Machine

The interactive space XIM is equipped with a number of sensors and effectors that provide the context for a variety of application scenarios. To interact with its visitors, it features 72 light-emitting floor tiles that also serve as sensors: their weight readings complement the visual data provided by an infrared camera installed in the ceiling in order to track objects in the room. Inside the space, visitors are surrounded by projection screens that display interactive content. Eight movable theater lights can be used for lighting effects and to highlight specific spots, while four wall-mounted pan-tilt cameras (gazers) can be directed at specific spots to provide a live image.

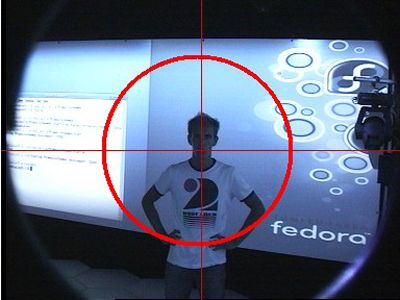

In this environment, a major challenge lies in tracking the people in the space. A multi-modal tracking system (MMT) fuses the input of multiple sensors and maintains a model of the space in real time. The specific Active Vision task examined in this thesis is the use of the four gazers to gain additional information about a person at a position reported by the tracking system. This additional information is particularly helpful when tracking data cannot be unambiguously assigned to a specific object in the model. Since deploying the gazers in this way presupposes an adequate calibration of each device, the project focuses on different calibration techniques and evaluates their strengths and weaknesses.

Classifier-Based Extrinsic Calibration

This thesis introduces an innovative approach to determining the extrinsic parameters of a movable camera, i.e., a gazer in the XIM. These parameters are needed to compute the pan and tilt angles that point the device at a specific spot. To keep the calibration as independent as possible of any equipment other than the tracking system, the extrinsic parameters are computed from a set of correspondences between tracking positions and the respective gazer angles. These correspondences are obtained in an initial calibration scenario, in which the space is scanned with the gazers to determine where a person is located and which angles make the gazer look straight at him or her. To detect the person in the gazer image, a previously trained classifier is continuously applied to the image, indicating how the gazer has to be adjusted. Meanwhile, the person's position in space is supplied by the XIM infrared tracking system.
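The centering step of this calibration scan can be sketched as follows. This is a minimal illustration under assumptions not stated above: the classifier is taken to return a bounding box `(x, y, w, h)` in pixels, the gazer is modeled as a pinhole camera with hypothetical intrinsics `fx, fy, cx, cy`, and the helper names `centering_correction` and `record_if_centered`, the sign conventions and the pixel tolerance are illustrative only. A correspondence is stored once the detection sits at the image center.

```python
import math
from dataclasses import dataclass


# Hypothetical pinhole intrinsics of one gazer (in pixels); real values
# would come from an intrinsic calibration of the camera.
@dataclass
class Intrinsics:
    fx: float
    fy: float
    cx: float
    cy: float


def centering_correction(box, K):
    """Pan/tilt increments (radians) that move the centre of a detection
    box (x, y, w, h) onto the principal point.

    Assumed conventions: positive pan turns the camera towards larger image x,
    positive tilt turns it towards smaller image y (i.e. upwards).
    """
    x, y, w, h = box
    u = x + w / 2.0
    v = y + h / 2.0
    d_pan = math.atan2(u - K.cx, K.fx)
    d_tilt = math.atan2(K.cy - v, K.fy)
    return d_pan, d_tilt


def record_if_centered(box, K, pan, tilt, tracked_pos, pairs, tol_px=4.0):
    """Append (tracking position, (pan, tilt)) once the detection is centred
    within tol_px pixels; returns True if a correspondence was recorded."""
    x, y, w, h = box
    du = x + w / 2.0 - K.cx
    dv = y + h / 2.0 - K.cy
    if math.hypot(du, dv) <= tol_px:
        pairs.append((tracked_pos, (pan, tilt)))
        return True
    return False
```

In a scan loop, the gazer would be moved by the returned increments until `record_if_centered` succeeds, after which it moves on to the next position of the person.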

A sufficient number of correspondences allows the desired camera parameters to be estimated as the best fit to a system of nonlinear equations set up from the matching pairs of tracking positions and gazer angles. This optimization is performed by a nonlinear Levenberg-Marquardt optimizer, which estimates the position and orientation of the gazer from a minimum of four correspondences. Rather than estimating the gazer's position relative to some predefined coordinate system, this setup yields its position relative to the coordinate frame spanned by the tracking system. Since the tracking data are given in exactly this coordinate frame, this is a significant advantage: no further transformation between coordinate systems is needed.
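The fit can be illustrated with a small NumPy sketch. It assumes a simplified pan-tilt model in which the pan and tilt axes intersect at the gazer position `p`, the orientation is a rotation vector `r`, and the gazer looks along its own +z axis at pan = tilt = 0; these conventions and all function names are assumptions, not the thesis's actual camera model. The hand-written Levenberg-Marquardt loop only shows the technique; a library routine such as `scipy.optimize.least_squares(..., method='lm')` would normally be used instead.

```python
import numpy as np


def rodrigues(r):
    """Rotation matrix from a rotation vector (axis * angle)."""
    theta = np.linalg.norm(r)
    if theta < 1e-12:
        return np.eye(3)
    k = r / theta
    K = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)


def predict_angles(params, points):
    """Pan/tilt a gazer with pose params = (p, r) needs to look at each point.

    Assumed model: R = rodrigues(r) maps gazer axes to tracking axes, the
    gazer looks along +z at pan = tilt = 0, and the pan/tilt axes meet at p.
    """
    p, R = params[:3], rodrigues(params[3:])
    d = (points - p) @ R  # each row is R.T @ (X - p), the point in gazer axes
    pan = np.arctan2(d[:, 0], d[:, 2])
    tilt = np.arctan2(d[:, 1], np.hypot(d[:, 0], d[:, 2]))
    return pan, tilt


def residuals(params, points, pans, tilts):
    """Stacked pan and tilt errors, wrapped to [-pi, pi]."""
    pan, tilt = predict_angles(params, points)
    diff = np.concatenate([pan - pans, tilt - tilts])
    return np.arctan2(np.sin(diff), np.cos(diff))


def levenberg_marquardt(f, x0, iters=200, lam=1e-3, h=1e-6):
    """Minimal LM loop with a central-difference Jacobian and Marquardt scaling."""
    x = np.asarray(x0, dtype=float)
    r = f(x)
    cost = r @ r
    for _ in range(iters):
        J = np.empty((r.size, x.size))
        for j in range(x.size):
            e = np.zeros_like(x)
            e[j] = h
            J[:, j] = (f(x + e) - f(x - e)) / (2 * h)
        A, g = J.T @ J, J.T @ r
        D = np.diag(np.diag(A)) + 1e-12 * np.eye(x.size)
        while True:  # raise damping until the step reduces the cost
            step = np.linalg.solve(A + lam * D, -g)
            r_new = f(x + step)
            cost_new = r_new @ r_new
            if cost_new < cost:
                break
            lam *= 10.0
            if lam > 1e12:  # no further improvement possible
                return x
        x, r, cost = x + step, r_new, cost_new
        lam = max(lam * 0.1, 1e-12)
        if np.linalg.norm(step) < 1e-12:
            break
    return x


def calibrate_gazer(points, pans, tilts, x0):
    """Fit gazer position and orientation in the tracking frame."""
    return levenberg_marquardt(lambda q: residuals(q, points, pans, tilts), x0)
```

Each correspondence contributes two residuals (pan and tilt), so four correspondences give eight equations for the six unknowns of position and orientation.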

Marker-Based Pose Estimation

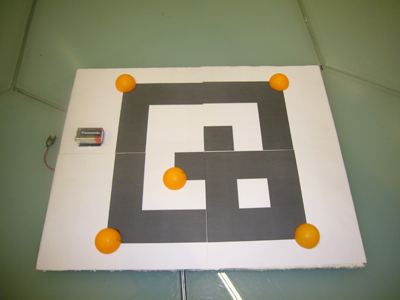

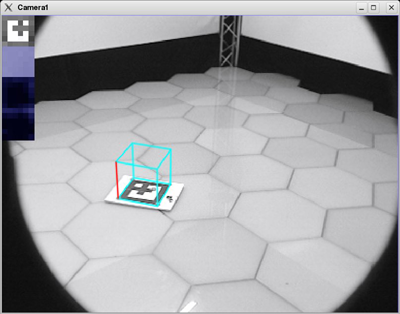

To assess the performance of the classifier-based calibration and the accuracy of the resulting poses, the results are compared to those of a state-of-the-art marker-based calibration method. An interactive marker equipped with infrared LEDs allows the poses of both the gazer and the overhead infrared tracking camera to be estimated relative to the marker. Combined, these two poses yield the position and orientation of the gazer relative to the overhead tracking system. The Ubitrack framework is used for the intrinsic calibration of the cameras and for the estimation of the individual poses. To compensate for errors in the marker detection, the pose estimation was repeated for several different marker positions. A bundle adjustment implemented in the Ubitrack framework then globally optimizes the poses, taking all involved parameters and error sources into account.
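Combining the two marker poses amounts to chaining rigid transforms. The sketch below assumes the convention that `T_a_b` is a 4x4 homogeneous matrix mapping points from frame b into frame a; the function names are hypothetical, and neither Ubitrack's own API nor its bundle adjustment is reproduced here.

```python
import numpy as np


def make_pose(R, t):
    """4x4 homogeneous transform from rotation R and translation t."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def invert_pose(T):
    """Closed-form inverse of a rigid transform: (R, t) -> (R^T, -R^T t)."""
    R, t = T[:3, :3], T[:3, 3]
    return make_pose(R.T, -R.T @ t)


def gazer_in_tracking_frame(T_track_marker, T_gazer_marker):
    """T_track_gazer = T_track_marker * inv(T_gazer_marker).

    Assumed convention: T_a_b maps points from frame b into frame a, so the
    marker frame cancels out of the chain.
    """
    return T_track_marker @ invert_pose(T_gazer_marker)
```

With several marker positions, each one yields its own estimate of `T_track_gazer`; their spread gives a first impression of the marker detection error that the bundle adjustment is meant to reduce.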

Attribute Extraction

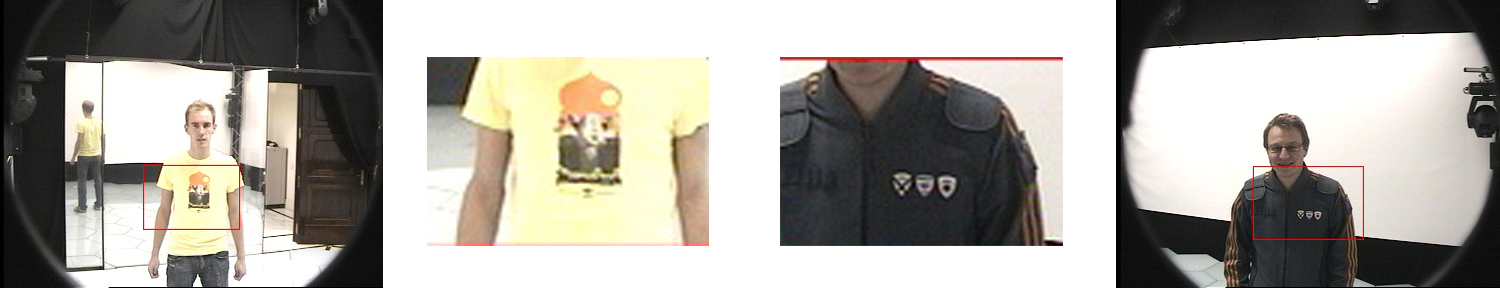

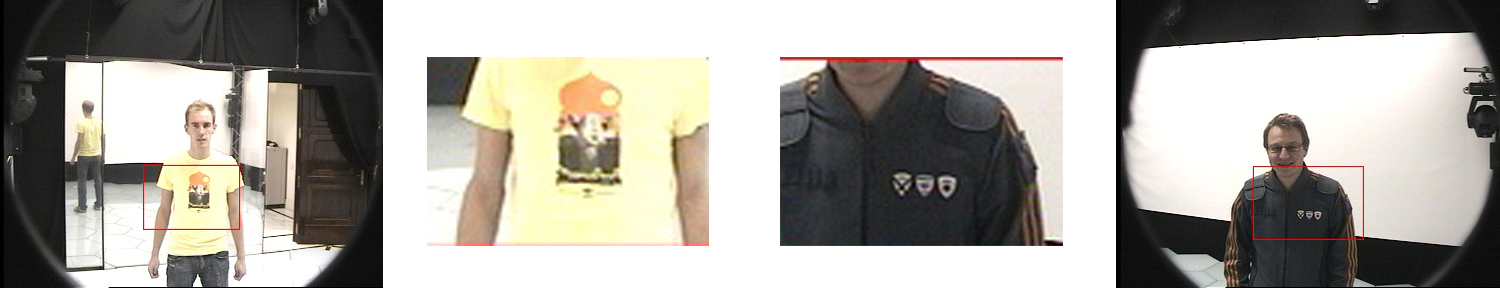

With the poses of the gazers known, the required pan and tilt angles can be computed online for any given tracking position. In communication with the multi-modal tracking system, the gazers are triggered to look at a point of interest once a tracked object is standing by itself and not moving. A region of interest is then selected and extracted from the gazer image, taking into account the distance between gazer and object. This image region then serves as input for further processing.
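A sketch of the online aiming and region-of-interest step follows. It uses an assumed pan-tilt model (axes meeting at the gazer position `p`, rotation `R` from gazer to tracking axes, viewing direction +z) and assumes that the ROI height follows the pinhole relation `focal_px * H / Z` for a person of height `H` at distance `Z`. The names `aim_angles` and `extract_roi`, the 1.8 m height and the width-to-height ratio are illustrative choices, not values from the thesis.

```python
import math

import numpy as np


def aim_angles(p, R, target):
    """Pan, tilt (radians) and distance for pointing a gazer at a 3D target.

    Assumed model: R maps gazer axes to tracking axes, +z is the viewing
    direction at pan = tilt = 0, and the pan/tilt axes meet at p.
    """
    d = R.T @ (np.asarray(target, dtype=float) - p)
    pan = math.atan2(d[0], d[2])
    tilt = math.atan2(d[1], math.hypot(d[0], d[2]))
    return pan, tilt, float(np.linalg.norm(d))


def extract_roi(image, distance, focal_px, person_height=1.8, aspect=0.4):
    """Crop a window around the image centre whose size scales with 1/distance.

    Pinhole approximation: an object of height H at distance Z spans about
    focal_px * H / Z pixels. person_height and aspect are assumed values.
    """
    h_img, w_img = image.shape[:2]
    roi_h = int(round(focal_px * person_height / distance))
    roi_w = int(round(roi_h * aspect))
    cy, cx = h_img // 2, w_img // 2
    y0, y1 = max(cy - roi_h // 2, 0), min(cy + roi_h // 2, h_img)
    x0, x1 = max(cx - roi_w // 2, 0), min(cx + roi_w // 2, w_img)
    return image[y0:y1, x0:x1]
```

Because the gazer is aimed straight at the target, the crop is taken around the image centre; the window is clipped at the image border for very close targets.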

A first approach to extracting distinguishing information from this region of interest is the creation of a hue histogram with a configurable number of bins. In a test scenario, such histograms are generated for various images taken by the gazers of distinguishable persons standing at different positions in the room. To determine whether a hue histogram contains enough information to clearly differentiate between the persons, different histogram comparison measures are tested and evaluated.
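Hue histograms and some common comparison measures can be sketched as below. The saturation and value thresholds that discard grey pixels, the 16-bin default, and the particular selection of measures (correlation, symmetric chi-square, intersection, Bhattacharyya distance) are assumptions for illustration, not necessarily the measures evaluated in the thesis. Similar measures are provided by OpenCV's `cv2.compareHist`, although its `HISTCMP_CHISQR` variant is asymmetric.

```python
import colorsys

import numpy as np


def hue_histogram(rgb, bins=16, min_sat=0.2, min_val=0.1):
    """Normalised hue histogram of an RGB uint8 region of interest.

    Pixels with low saturation or value have an unstable hue and are skipped;
    both thresholds are assumed values.
    """
    hues = []
    for r, g, b in rgb.reshape(-1, 3) / 255.0:
        h, s, v = colorsys.rgb_to_hsv(r, g, b)
        if s >= min_sat and v >= min_val:
            hues.append(h)
    hist, _ = np.histogram(hues, bins=bins, range=(0.0, 1.0))
    hist = hist.astype(float)
    total = hist.sum()
    return hist / total if total > 0 else hist


def correlation(h1, h2):
    """Pearson correlation of bin values (1 = identical shape)."""
    a, b = h1 - h1.mean(), h2 - h2.mean()
    denom = np.sqrt((a @ a) * (b @ b))
    return float(a @ b / denom) if denom > 0 else 0.0


def chi_square(h1, h2):
    """Symmetric chi-square distance (0 = identical)."""
    s = h1 + h2
    m = s > 0
    return float(np.sum((h1[m] - h2[m]) ** 2 / s[m]))


def intersection(h1, h2):
    """Histogram intersection (1 = identical for normalised histograms)."""
    return float(np.minimum(h1, h2).sum())


def bhattacharyya(h1, h2):
    """Bhattacharyya (Hellinger) distance for normalised histograms (0 = identical)."""
    bc = np.sum(np.sqrt(h1 * h2))
    return float(np.sqrt(max(0.0, 1.0 - bc)))
```

Distances such as chi-square and Bhattacharyya are small for similar histograms, whereas correlation and intersection are large, so a threshold for "same person" has to be chosen per measure.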
